AI is Stealing the Variance from Our Classrooms

There is a vital developmental difference between how adults and young people interact with generative AI. Adults experience cognitive atrophy: the slow degradation of an already established skill from disuse. Young people under 25 face cognitive foreclosure: the closing of a developmental door, which prevents the foundational capacity for critical thinking from ever being built in the first place.

The Standardization of Imagination

I was talking to a high school health teacher recently. He'd been thinking about how to design an AI-proof assessment, one his students couldn't outsource to ChatGPT. Many of his colleagues are frustrated with essays. His idea was clever: write a fictional story as a metaphor for the process of childbirth.

None of his students had given birth. The prompt required imagination, not retrieval. He expected variance. Some would be ridiculous. Some would be poetic. Some would miss the point entirely. That range would be what made the assignment alive.

A few weeks later he started reading the stories. A large majority followed the same Mission: Impossible-style hero's journey.

Everything was normal at the start of the day. Then...something happened, symbolic of the contractions. Then it became an action movie, the hero pushing through escalating obstacles, until the final breakthrough at the end. Same arc. Same beats. Same emotional structure.

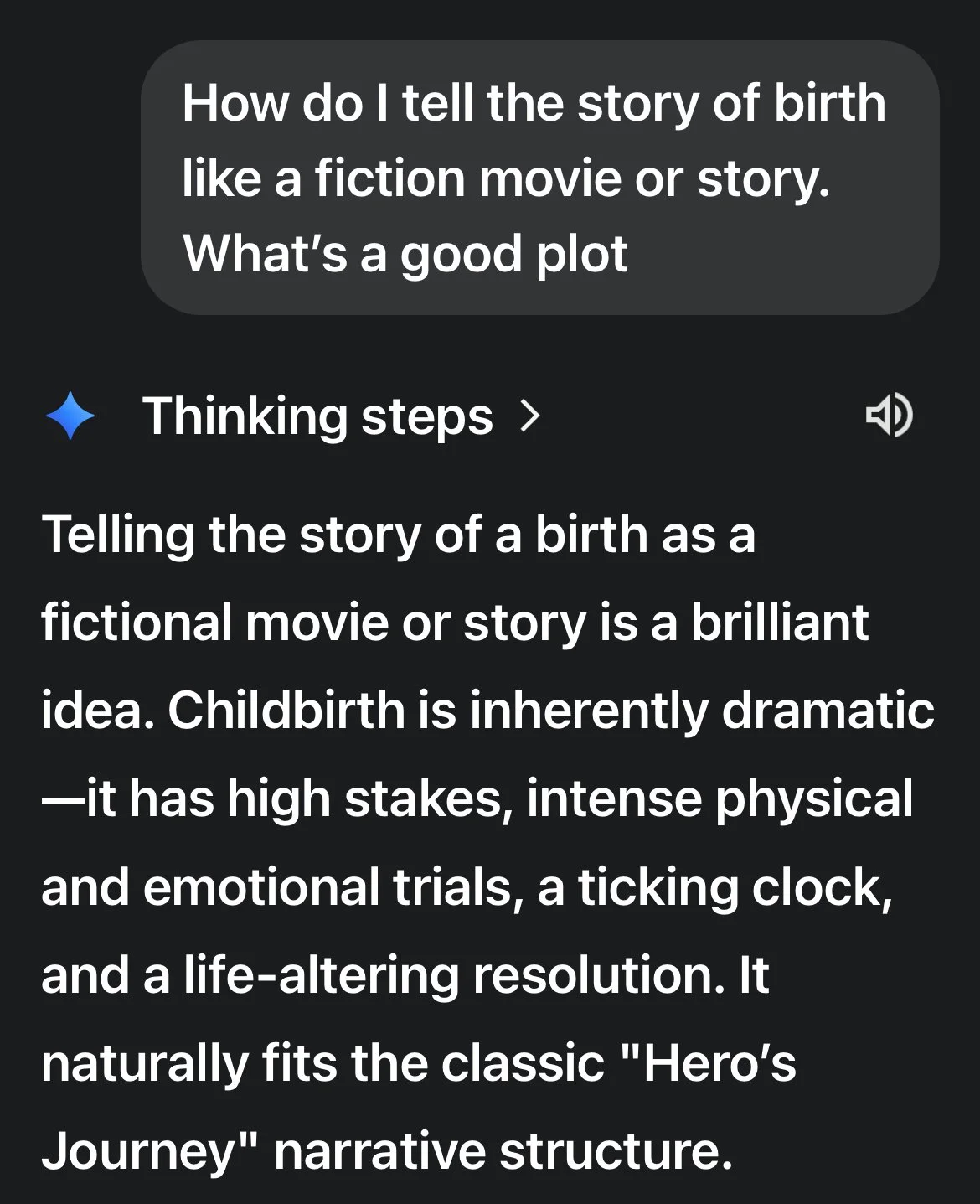

When he told me this, I was skeptical. So I opened Gemini on my phone and put in a prompt myself. The first option it gave me was the hero's journey.

Results may vary depending on the prompt.

What shocked him wasn't the shortcut to an LLM. It was the absence of variety. The whole point of the assignment was to surface what these students would imagine when no one had told them what to imagine. Instead, he got the model's imagination, a dozen times over.

This year, kids were trending toward an average. Polished, competent, and functionally identical. What's getting lost is not the quality of individual outputs (those might actually be higher on average). It's the variance.

There had always been a confused student who came to class with a completely wrong interpretation of supply and demand. Maybe they thought prices go up when demand drops. That student used to start a conversation. The teacher would ask, "Why?" Other students would push back. The wrong answer became the most valuable moment in the lesson.

Convergence through an LLM arrives with the right answer. Or at least the average answer. The machine's answer. There's nothing to push against because there's no variation to explore. The bad takes were where the learning happened. Now there are fewer bad takes.

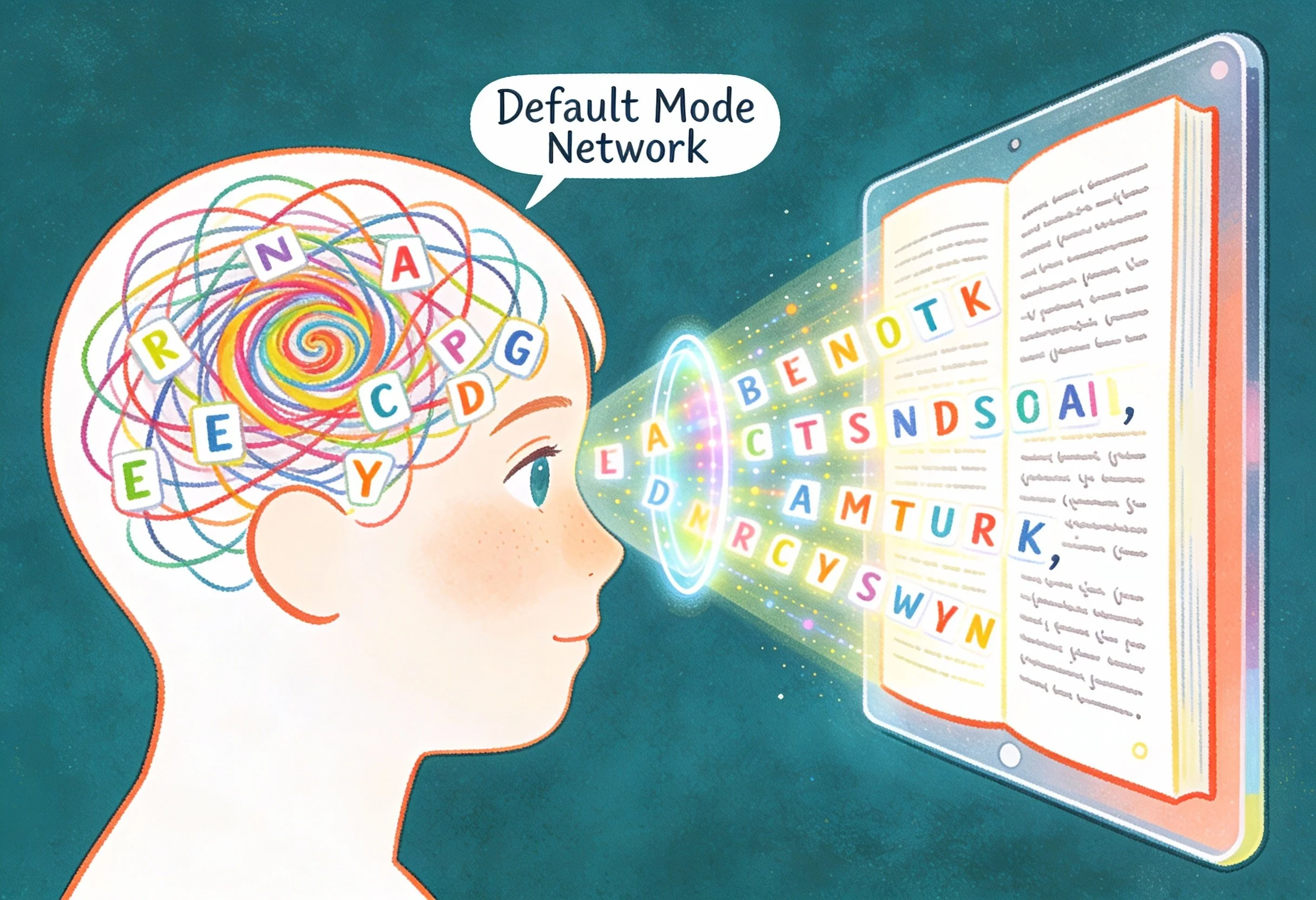

Cognitive Atrophy vs. Cognitive Foreclosure

Here's where I've landed on this.

Adults who offload cognitive tasks to AI are making a choice. I do it myself. When I ask an LLM to help me restructure a paragraph, I'm choosing to hand off a task I already know how to do. If I stopped using it tomorrow, the skill would still be there. Maybe a little rusty. But the foundation exists. I'm choosing that trade-off and recognizing the consequences.

Children don't have that foundation yet. Gerlich's 2025 study found strong negative correlations between AI usage and critical thinking scores. The numbers aren't subtle: r = -0.68 between AI use and critical thinking, r = +0.72 between AI use and cognitive offloading. The age pattern matters. Participants aged 17-25 showed the highest AI dependence and the lowest critical thinking scores. Participants over 46 showed the opposite.

Adults are experiencing atrophy. The skill degrades from disuse. It's real, but theoretically reversible because the foundation was built. Young people under 25, whose prefrontal cortex (the region responsible for judgment and decision-making) hasn't finished developing, aren't offloading a skill. They're closing the door to building it in the first place.

There's a difference between choosing not to do something you can do and never developing the capacity in the first place. AI companies don't make this distinction because both populations look the same in their usage data. But developmentally, they're not the same at all.

Why Gen Z Knows Something is Wrong

I'm convinced this isn't adult anxiety projected onto children. Children are sensing it themselves.

A Gallup survey released this week found that Gen Z's feelings about AI are souring fast. The percentage of 14-to-29-year-olds who feel hopeful about AI dropped from 27% to 18% in a single year. Nearly a third now say the technology makes them feel angry.

The researchers were surprised by how quickly attitudes shifted. But the reason is telling: respondents said they were concerned about how AI would affect their creativity and critical thinking skills. Not jobs. Not privacy. Creativity and critical thinking. The capacities themselves.

One respondent, Abigail Hackett (27, working in tourism near Anchorage), put it this way: "I still feel hesitant in using it to draft my communications to other people, just because I think some of those things are very human, and I'd like to keep them that way." She said she doesn't want her "social muscles to atrophy." She used the word atrophy. Without prompting. Without reading the research. She's feeling it.

The youngest respondents (those still in high school) were the most frequent AI users. They were also the ones predicting they'd need AI fluency for their careers. They're caught between knowing something is being lost and believing they have no choice but to keep using the tools anyway.

That's the trap. Use the tools or fall behind. But using the tools forecloses on the capacities you'd need to not need them.

What Authentic Student Agency Actually Sounds Like

I teach third grade in Amman, Jordan. My students don't use AI tools directly because they are eight. But I've been running an experiment that shows what happens when kids maintain ownership over the thinking process.

A group of students asked if they could make their own math game. Multiplication and division fluency, the kind of practice that usually lives inside gamified apps where the learning is thin and the dopamine hits are frequent. I said yes, but with conditions.

The students went into a room with a recorder. Their job was to have a conversation about the game they envisioned. What are the rules? What are the constraints? What's the reward structure? They had to talk it out between themselves. No screens.

I transcribed that conversation. I fed the transcript into an LLM. Not to design the game, but to generate follow-up questions the students hadn't considered. Design decisions they'd need to make before any code could be written.

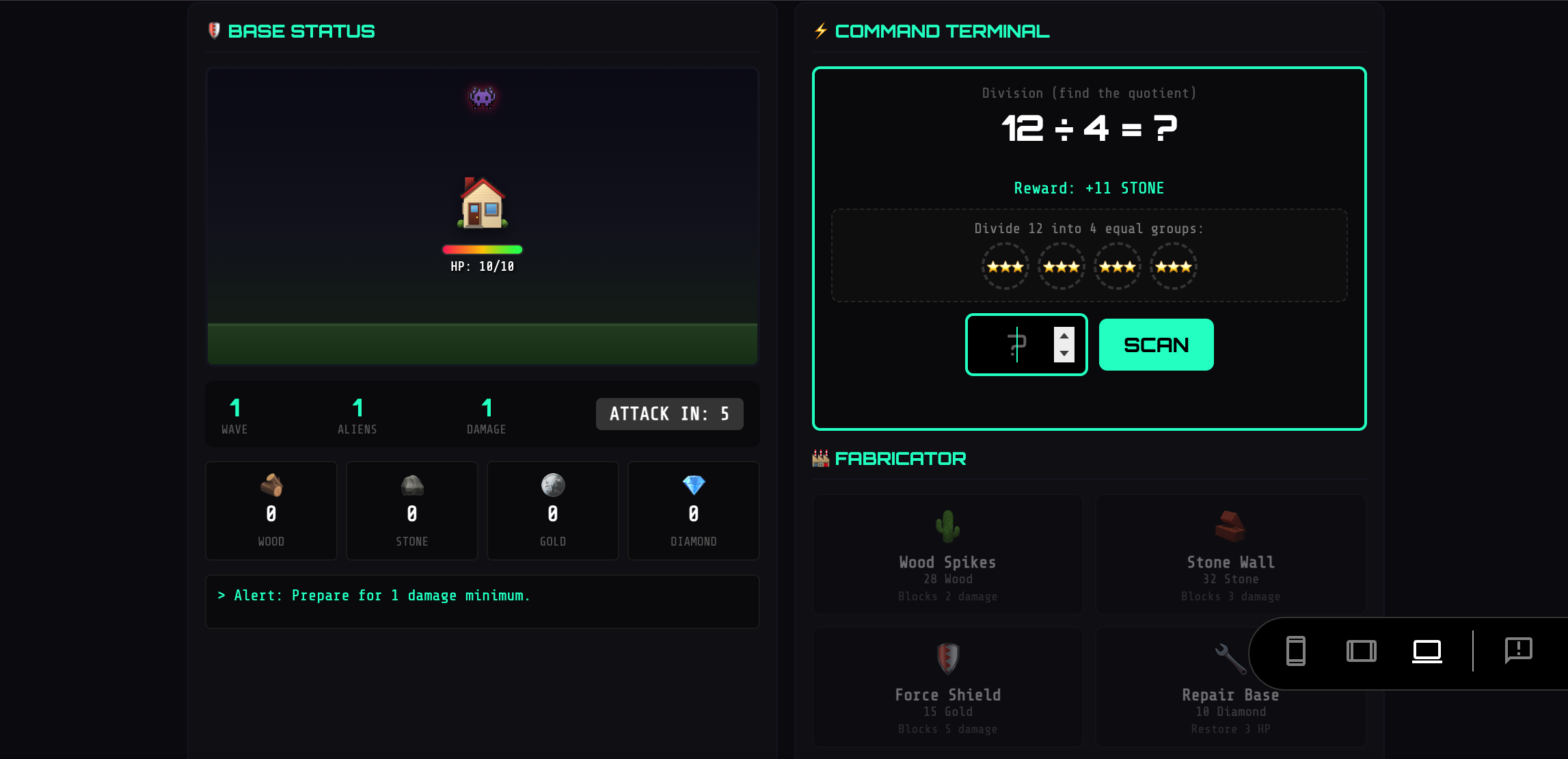

One pair designed a survival math game. Aliens attack after each math problem. You earn resources (wood, stone, gold) by solving questions correctly. You use resources to build defenses. Spikes. Walls. Bunkers.

Alien Survival Island

The AI-generated questions assumed the game's math was broken. If an alien spawns every turn but it takes seven turns to earn enough wood for one spike, the player loses immediately. How do you fix that?

The student's response: "No, no, no, no. Every question you get seven wood and that is enough to craft four spikes." He'd already solved the problem. The machine hadn't understood his system. He corrected it.

This is what agency sounds like. An eight-year-old pushing back on a generated assumption because he understood his own design better than the tool did. He wasn't verifying plausible output. He was defending a system he built himself.

The students did the thinking. The AI did the coding. That's the right division of labor.

If you skip the confusion and the bad interpretations, if you skip the friction that would have forced you to wrestle with the material or concept, what you get isn't your own thinking. It's AI's thinking with your name on it.

For adults, that's a choice with potentially recoverable consequences. For children still building the cognitive architecture that will serve them for the rest of their lives, it's something else. The door doesn't just close slowly. Once foreclosed, there's no evidence it reopens.
