Is Anyone Still Thinking For Themselves? (Published by Frankfurter Allgemeine Zeitung)

The question of whether Artificial Intelligence makes us stupid cannot be answered yet. It is time to consider what happens to a society that offloads thinking to machines.

By Piotr Heller - Translated by Gemini to English

Something has shifted in schools, says Timothy Cook. When teachers asked their students to engage with a text a few years ago, the results were often surprising. "You would get back a variety of different thoughts." Today, that is no longer the case. Students all come up with nearly identical ideas and bullet points. "Every teacher I speak to tells me the same thing," says Cook.

For Cook, the cause is obvious: generative AI, such as ChatGPT and its ilk, is to blame. Students no longer think for themselves; they let the AI do their homework. This has become commonplace. Just last week, the American think tank Rand published a survey showing that 62 percent of teenagers openly admit to letting Artificial Intelligence complete their schoolwork. The topic of schools and AI is close to Timothy Cook's heart. He is a teacher himself, founded the "Connected Classroom" initiative, which researches the influence of AI on learning, and writes a column on the subject for Psychology Today. But what is happening in schools is merely a symptom of a larger change.

Generative AI has embedded itself into people's daily lives faster than any previous technology. And it is penetrating deeper into areas that were previously reserved for human thought. It starts with people asking chatbots about every little thing instead of looking for solutions themselves. Newspaper articles are no longer read; instead, the AI is asked for a summary. And no one even has to put pen to paper anymore: at least one in seven biomedical studies, one in five customer complaints, and one in four corporate press releases are now written with AI.

If we delegate so many tasks that actually require thinking to machines, does the machine then also influence our thinking? Does it perhaps even restrict it, just as the variety in classroom homework has dwindled? "How can we still have a functioning democracy if everyone, to put it cautiously, thinks the same thing?" asks Timothy Cook. He and other experts are working on ideas for how to protect one's mind from AI.

To understand this, one must first understand how the technology influences thought. One of the first studies to address this question was by Nataliya Kosmyna. The computer scientist from the MIT Media Lab had 54 students write essays while she measured their brain activity via electrodes on the scalp. One group of students was allowed to use ChatGPT for help. The brains of the group without AI help showed significantly more extensive networking between different brain regions than those of the ChatGPT group. Furthermore, the participants who did not use AI could remember their own essays better.

The study was not peer-reviewed—but it made waves. From Forbes to The Economist, various media outlets asked the question: Is ChatGPT making us stupid? Kosmyna emphasized that her study did not answer this question. She had only a few participants, and stronger networking in the brain is not inherently good or bad: "It could be a sign that someone is intensely engaged with a task—or an indication of inefficient thinking," she told Nature magazine. However, it seems clear that using AI does something to the mind. But what?

Michael Gerlich, a professor of economics at the Swiss Business School in Zurich, also investigated this. In a non-representative survey published in the journal Societies, he asked 666 people about their use of and attitude toward AI. According to the study, the more intensely AI was used, the less critical the respondents were. In other words, those who used AI particularly intensively questioned its results less often. The effect was greater the more willing the respondents were to delegate cognitive tasks to Artificial Intelligence: "Participants who outsourced their cognitive tasks to a higher degree through the use of AI tools showed lower abilities for critical thinking," Gerlich writes.

This mental outsourcing is a double-edged sword. Using AI to find an answer quickly can free up mental resources. On the other hand, skills that are outsourced are learned less effectively. Consequently, one achieves better results thanks to the AI but restricts oneself in the long term. A study from Turkey shows that math students come up with better solutions to problems using an AI than without it, but perform worse in exams without any help than classmates who had no access to AI while learning. A study by the company Anthropic revealed that programmers mastered new tasks faster with AI support, but understood the resulting program code less well and were less able to check it for errors than their colleagues who did not use AI.

For Timothy Cook, studies like these prove a danger to the mental development of children: adults can decide for themselves which skills they outsource to an AI. But for children and adolescents, the technology takes away the opportunity to develop skills in the first place. "To learn something, you need friction and effort," he says. AI, however, eliminates friction; it is designed to answer questions at a level that is understandable and satisfying to the user. Zurich researcher Gerlich goes a step further. He senses a danger for society: "If society generally thinks less critically, then it is also more easily influenced."

It is also true, however, that these studies do not prove causality. Does AI reduce critical thinking, or do less critical people tend to use the technology? The studies are also only snapshots that do not reveal whether the use of AI really has long-term consequences for the development of skills. Therefore, not all experts are as worried as Cook and Gerlich. Yes, it is possible that one learns less when using AI, says psychologist Markus Appel from the University of Würzburg. This is due to speed. If you have a text summarized by AI without reading it yourself, you spend less time with it. "There is less opportunity for learning-conducive processes to take place." But critical thinking is not in danger because of this, he says. It would merely shift. "I believe that simple things will be delegated much more," he says. For example, searching for information. Humans would then have to take over the higher-level mental processes. "The handling of information could even be strengthened," he says.

The key point is therefore not the question of whether one outsources mental processes to the AI, but which ones. Here, a mixed picture emerges. A year ago, Anthropic asked students what they used chatbots for. According to the survey, only five percent used them to evaluate information. Far more used AI for other, quite complex thought processes: 40 percent let the chatbot develop ideas, and 30 percent used it to analyze information. A study by researchers from China and Australia, published in the British Journal of Educational Technology, also showed that students who researched with AI tended to check and question their work less often. The authors call this "metacognitive laziness."

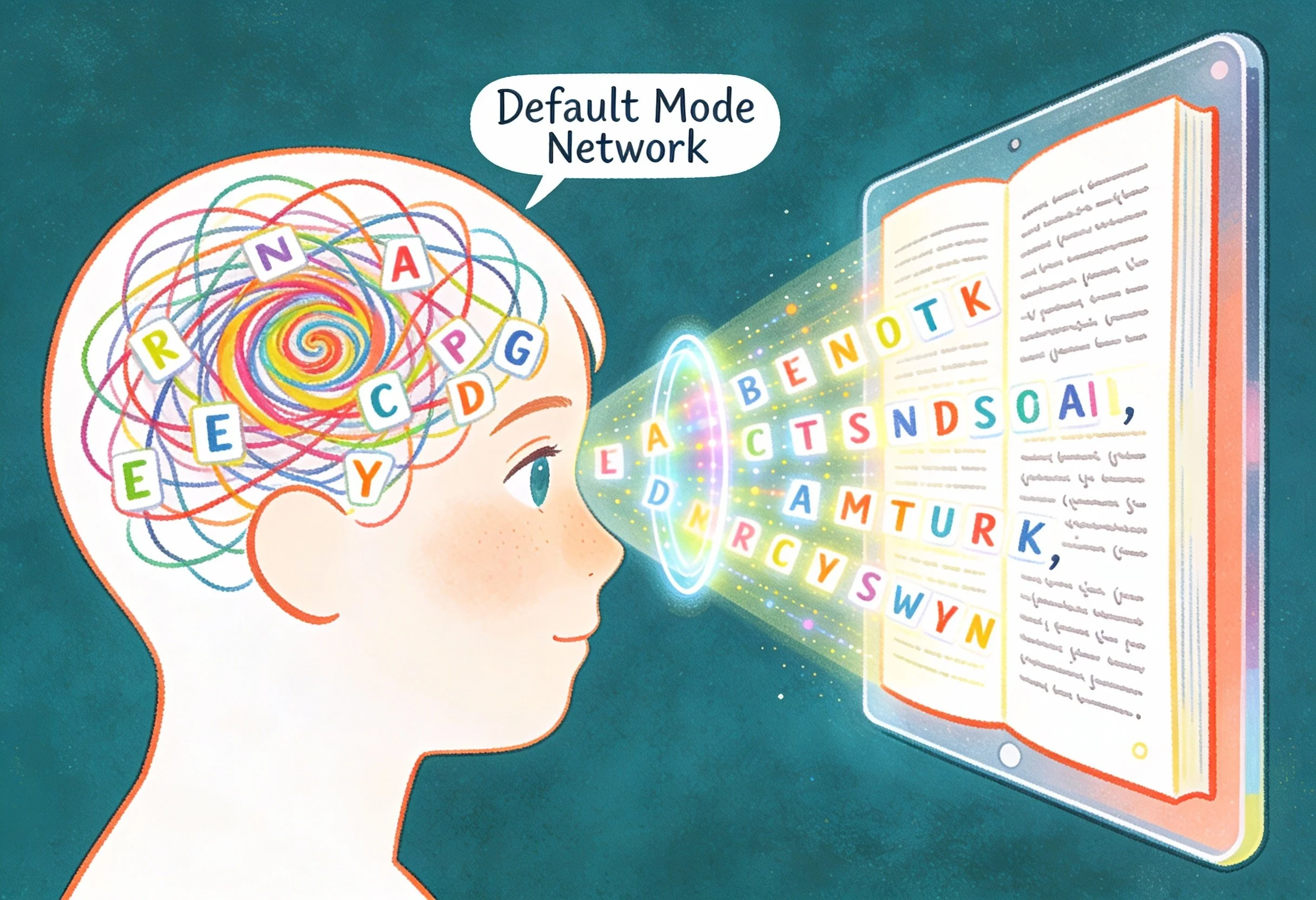

Using AI to get ideas could be a problem. This is where the difference from previous technologies lies: while writing itself and search engines primarily made it easier to store and find information, "language models act as co-thinkers that participate in writing and problem-solving," writes computer scientist Zhivar Sourati from the University of Southern California in the journal Trends in Cognitive Sciences. The assumption that humans critically check AI results runs up against the so-called "anchoring effect," according to which the first piece of information one receives has the greatest influence on thinking. If you ask AI for help, the first answer is the AI-generated answer. In another study by Zurich researcher Michael Gerlich, test subjects were asked to write essays. Those who were allowed to ask the AI for an initial assessment of the topic used its answer as a starting point for their thoughts. Subsequent interviews, described in the journal Data, revealed that they were not even aware of this influence of the AI.

Viewed socially, this can lead to homogenization, argues Zhivar Sourati. After all, AI systems are based on analyzing the statistical regularities of a language in gigantic amounts of text. "On a large scale, what begins as statistical pattern learning becomes a generative force that favors central tendencies, while rare forms of expression, alternative ways of thinking, and culture-specific voices are pushed to the margins." This influence is not limited to what the AI produces. "It shapes our thoughts," says Sourati.

A study in the journal Science Advances shows, for example, that AI supports individuals in creative writing, but the resulting stories of different people resemble each other more than if no AI had been used. Another paper in Scientific Reports shows that AI can write more creatively on average than humans, but does not reach the performance of particularly creative individuals. Experts like Sourati or Gerlich therefore fear that a society that relies too heavily on AI will lose extraordinary voices. Public opinion would narrow to one perspective, just as essays in classrooms do. And this perspective would be steered by a handful of AI companies.

As long as there are no long-term studies, it is debatable how realistic this scenario is. But how can, and how should, one counter the risk? Researchers are working on personalizing AI further so that it helps individual users find their own voice. But Sourati is skeptical. "The models have largely identical training data." Personalizing them after the fact could achieve little. Moreover, the problem remains that one becomes dependent on the AI in one's thoughts.

Another approach relies on active choice: which mental tasks do you want to outsource to the machine and which not? In Michael Gerlich's Data study, there were participants who were only allowed to use the AI to a limited extent. They first had to formulate their essay themselves, then they were allowed to research further information with AI help, improve their essay, and finally give the almost finished product to the AI one more time for review. This type of collaboration strengthened critical thinking instead of weakening it.

Can a model for AI use be derived from this? "Systematic interruptions must be built into the process, thanks to which humans have room for their own reflection," says Gerlich. Furthermore, human thought must come first; the AI should only be used afterwards, as a research tool or mental "sparring partner."

Of course, schools could try to restrict the AI use of their students in this way, and companies could also set such boundaries for their employees. But ultimately, it will come down to the individual and the question of how they use the technology. How can they be convinced? Zhivar Sourati believes one would have to show what is at stake. "Different people and different voices and perspectives are what a society needs to solve problems." One must talk more about it.
