The Subtle Homogenization of the Mind

People have different skills with AI. There's good, bad, and ugly.

I get emails that are obviously ChatGPT. Four paragraphs and bullet points where two sentences would have sufficed. I receive documents for upcoming meetings or tasks that are so generic they were clearly fed through an LLM. I read LinkedIn posts that make no claims in 500 words of perfectly structured prose.

These interactions create a physical aversion. The sender spent thirty seconds generating the message. I spend five minutes navigating it. The asymmetry is insulting. They used AI to appear unnecessarily competent at the receiver's expense.

So now, when I read anything through a screen, my first thought is: was this AI-assisted?

I read Michaeleen Doucleff's piece in The Atlantic last week, "The Dieting Myth That Just Won't Die." Well-crafted. Clear structure. Hook, method, result, implication. Seamless transitions. Phrases like "the findings were dramatic" and "from these data, researchers devised a bold new theory."

My first thought: was this AI-assisted?

I couldn't tell. And I've been thinking about AI and writing for over a year now. I've written about it extensively. I'm not accusing Doucleff of anything. That's the point. The question itself has become unanswerable. Whether AI was used or not carries no judgment.

So where do we draw the line? I wrote this newsletter with AI recommendations. The ideas are mine. The observations are mine. Some prose was assisted by an LLM. So who am I to scrutinize anyone?

I sat with that hypocrisy for a while. And I've come to believe the hypocrisy is the point.

Professional writing was already optimized for the patterns AI reproduces. Academic prose. Magazine prose. Consulting prose. The formulas existed before LLMs arrived. Hook, method, result, implication. That structure wasn't invented by AI. It was absorbed by AI models because it dominated the training data. The training data was human writing.

So when I read polished, well-structured prose and wonder if it's AI-assisted, I'm really asking: does this follow the conventions of professional writing? The answer is almost always yes. That's what professional writing means.

The Atlantic article could be entirely human-written and still follow the exact patterns an LLM would produce. Because the LLM learned those patterns from articles like it.

I've concluded that I should stop asking whether writing is AI-assisted. That musing leads nowhere. But something does matter, especially with the people we trust. Because while competent writing is just that, I now wonder: does the person understand what they wrote? Can they defend it without an LLM in the room?

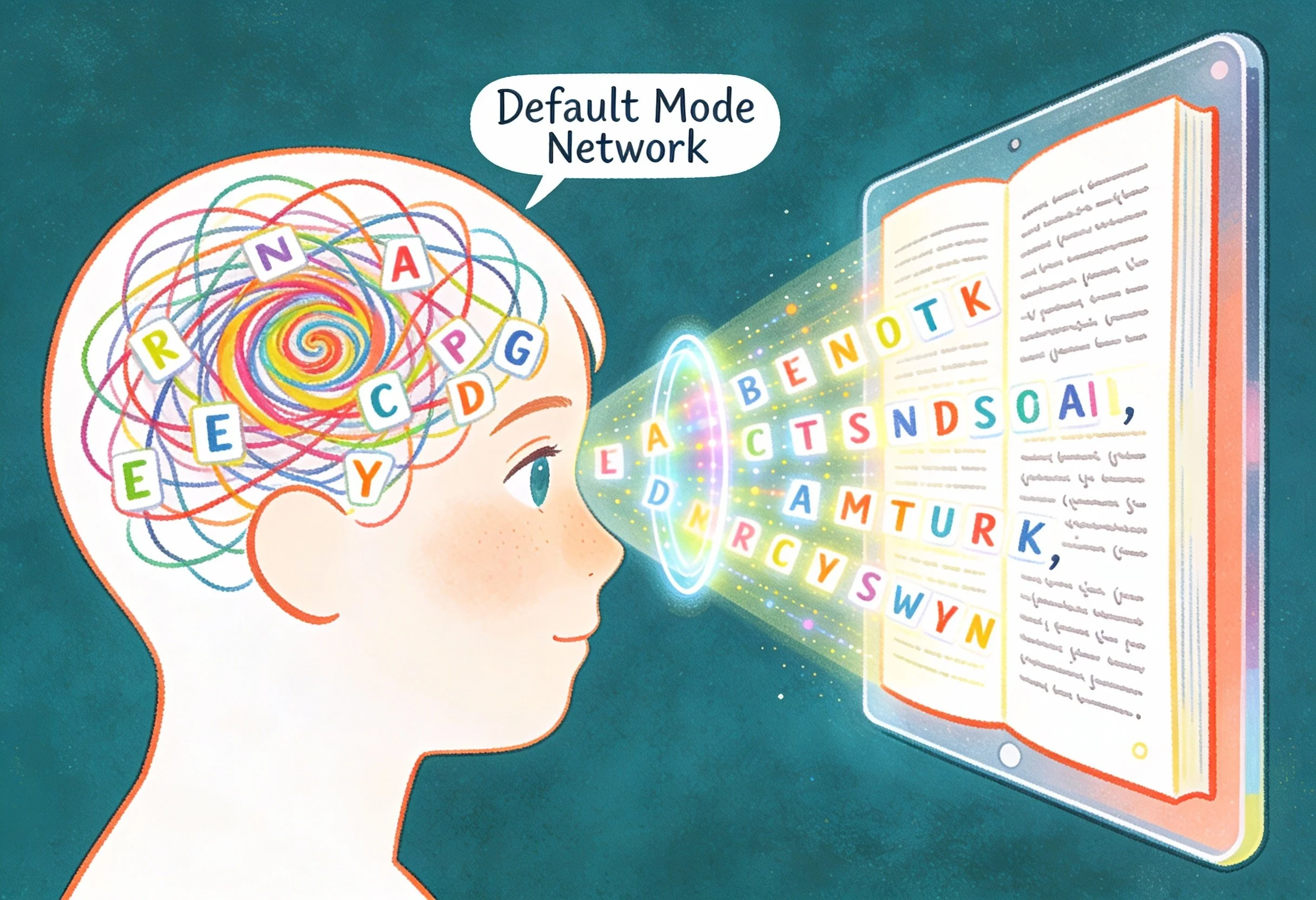

I just published an article in Psychology Today called "AI Is Quietly Colonizing How You Think." Colonization follows a pattern. The colonizer arrives, learns the local habits, codifies them, and hands them back, modified, as the "correct" version. Over time, the colonized population begins speaking and thinking in structures that originated elsewhere and calling them native. The imported architecture becomes invisible because it feels familiar.

This is what LLMs are doing to human thought. Writing used to be a reliable signal of thinking. If you could produce a coherent argument on paper, you probably understood it. The friction of composition (struggling with a sentence that won't come together, realizing a paragraph doesn't follow, hitting a transition you can't earn) forced you to confront gaps in your reasoning. The writing was hard because the thinking was incomplete.

That friction is gone. LLMs produce smooth prose from rough ideas. You read the output, recognize your own ideas reflected back, verify it as "correct," and call it a day.

I've done this. I've read an AI-assisted draft of my own work and thought, "Yes, that captures what I meant." Later, I've tried to explain the argument out loud and stumbled at exactly the points where the LLM made a decision that was never mine. The prose was smooth. The reasoning wasn't. I just couldn't tell from reading.

Recognition feels like verification. It isn't.

This is the colonial mechanism operating at the cognitive level. The LLM mirrors your ideas in a structure you didn't build. You see your raw material inside imported architecture. The architecture feels natural because it resembles professional conventions you already respected. So you approve it. You call it yours. You stop noticing that the sequencing of ideas, the emphasis, the way the argument resolves, none of those choices were yours. They were the AI's. You just agreed with them.

I was recently reading a beautifully written piece by Taran Rampersad titled "Why AI Writing Converges: The Adjacent Possible of Language Models." He explains, in plain English, how this phenomenon actually works:

What enters the model strongly influences where those attractors form. Language models reflect patterns already present in their training data. When inaccurate assumptions, distorted narratives, or collective biases appear frequently in the data, those patterns can also become statistical attractors. In that sense, the phenomenon resembles a form of DIDO — delusion in, delusion out — where flawed inputs propagate through the system and reappear as plausible outputs.

LLMs trend toward consensus. They hedge. They present both sides. They produce structurally sound arguments that say nothing bold. Left to their own devices, they would be defensive, not provocative.

Humans trend toward conviction. Partiality. Overreach. Conspiracy. A flawed but bold argument is harder for an LLM to produce than a sound but boring one.

So paradoxically, the logical gaps in human writing might be evidence of cognitive sovereignty. The places where a writer pushes too hard, where the framing stretches beyond what the evidence supports, where the counterargument goes unaddressed. Those are the places the LLM would have smoothed over.

The flaws are the fingerprint. The overreach is resistance. The parts of your thinking that refuse to conform to the training distribution are exactly the parts worth reading.

I'm not sure what to do with this observation except to take it seriously. It suggests that the most "human" writing is also the most flawed. The most defensible writing is the most machine-like. The credibility signals have inverted.

But I've come to believe the tension is productive. I see the homogenization happening in my own work, in Doucleff's Atlantic piece, in professional networks where one idea blurs into the next. I feel the temptation to let recognition substitute for verification. I catch myself (though not always) absorbing LLM reasoning patterns and mistaking them for my own insights.

The difference, if there is one, is that I'm trying to notice. And I'm putting my ideas in formats where the proof can't be faked. Podcasts where I have to respond in real time. Webinars where people question. Presentations where the thinking has to happen live.

This is not a broad solution. It's a personal practice. And maybe that practice is all that separates my own thinking from colonized thinking at this point. I use tools, sure, but can I still function without them?

Whether I'm succeeding is not for me to judge. That's the point.

