The App Saw You Delete That

You record a video. You watch it back. You hate how you look. You delete it.

You think that moment is gone. Private. Just between you and your phone.

It's not.

That deleted video, the one you made at 10 PM when you were feeling insecure about yourself, the one you watched three times before deciding it was too embarrassing to post, is not a secret. That video taught the app something about you. Not just that you recorded it. But that you hesitated. That you watched it multiple times. And then you deleted it.

The app saw your insecurity before you even named it yourself.

Your "delete" key is no longer a privacy tool. It is a behavioral signal.

Most people don't realize that major video-sharing and social media platforms don't just collect what you post. They collect what you almost posted. The drafts you abandoned. The caption you typed and erased. The search you started and never finished. The twelve seconds you paused before deciding not to send that message. This is called pre-upload scanning. It means the platform is watching you think.

When you draft a message, delete it, rewrite it, and delete it again, the pattern becomes data. When you start typing a search about something you're worried about and then abandon it, that hesitation gets recorded. When you record five takes of the same video because you don't like how you look in any of them, you are providing behavioral data about your confidence, your insecurities, and the gap between how you see yourself and how you want to be seen.

The content you chose not to share often reveals more about you than the content you did share. A published post shows what you're willing to defend publicly. A deleted draft shows what you're actually thinking.

I'm a teacher. I've spent fifteen years watching young people figure out who they are. That process of learning about yourself requires privacy. Figuring out your identity means trying on different versions of yourself. It means having thoughts you're not sure about yet. It means asking questions you'd be embarrassed to ask out loud. It means being confused, being wrong, changing your mind. That's not weakness. That's development. That's literally how you become a person. And it’s all perfectly okay.

But this process only works if you have space to do it without being watched. Everyone who has had a child or been a child understands the importance of having room to experiment with ideas, aesthetics, beliefs, and identities without those experiments becoming permanent records that can be viewed for the rest of your life. Previous generations could reinvent themselves. You could be awkward in middle school and become someone completely different in college. Your mistakes didn't follow you. They were never recorded and stored in digital space.

That's disappearing. When every deleted draft is captured, when every hesitation is logged, when every abandoned search becomes part of your behavioral profile, you lose the space to figure things out privately. The "fresh start" that previous generations took for granted doesn't exist anymore.

That 15-year-old who deleted his shirtless video because he hated how his body looked? The platform didn't just capture that he deleted it. The platform learned that he has body image insecurity. And now it knows exactly what content will keep him scrolling — fitness influencers with bodies he'll never have, content that makes his lack of progress feel like failure, and ads for supplements promising to fix what he's ashamed of.

The algorithm learned his vulnerability from the thing he tried to hide. And it will use that knowledge to show him content designed to keep him engaged by keeping him insecure. This isn't a glitch. This is the business model. Platforms make money by keeping you on the app. Insecurity keeps you scrolling. So does anxiety. So does the feeling that everyone else has it figured out except you. The algorithm doesn't care whether you develop a healthy relationship with your body. It cares whether you keep generating engagement.

Your private moment of vulnerability becomes a data point used to target you with content calibrated to that exact vulnerability. And unfortunately, it’s not just social media. In schools across the country, maybe even your own, monitoring software scans everything students write on school devices. This includes private documents, emails, and even things you never shared with anyone.

If a 13-year-old girl writes a private journal entry in Google Docs exploring questions about her sexuality, she's not intending to share it. She's just thinking through writing, the way people have always processed difficult questions. But now the monitoring software flags the entry because she wrote the word "lesbian." Her parents get notified.

This child, at only 13, learns a devastating lesson: thinking is dangerous. Self-reflection has consequences. The tool meant for learning becomes a trap for her thoughts. And her experience isn't a fringe case. According to a survey by the Center for Democracy & Technology, 29% of LGBTQ+ students reported they or someone they knew had been "outed" to an adult due to school surveillance software.

Twenty-nine percent! This is what surveillance does to a developing mind. It doesn't just watch behavior. It changes the relationship you have with your own thinking. When you know your thoughts might be monitored, you start policing yourself. You stop exploring. You perform a version of yourself that's safe for invisible audiences instead of actually figuring out who you are.

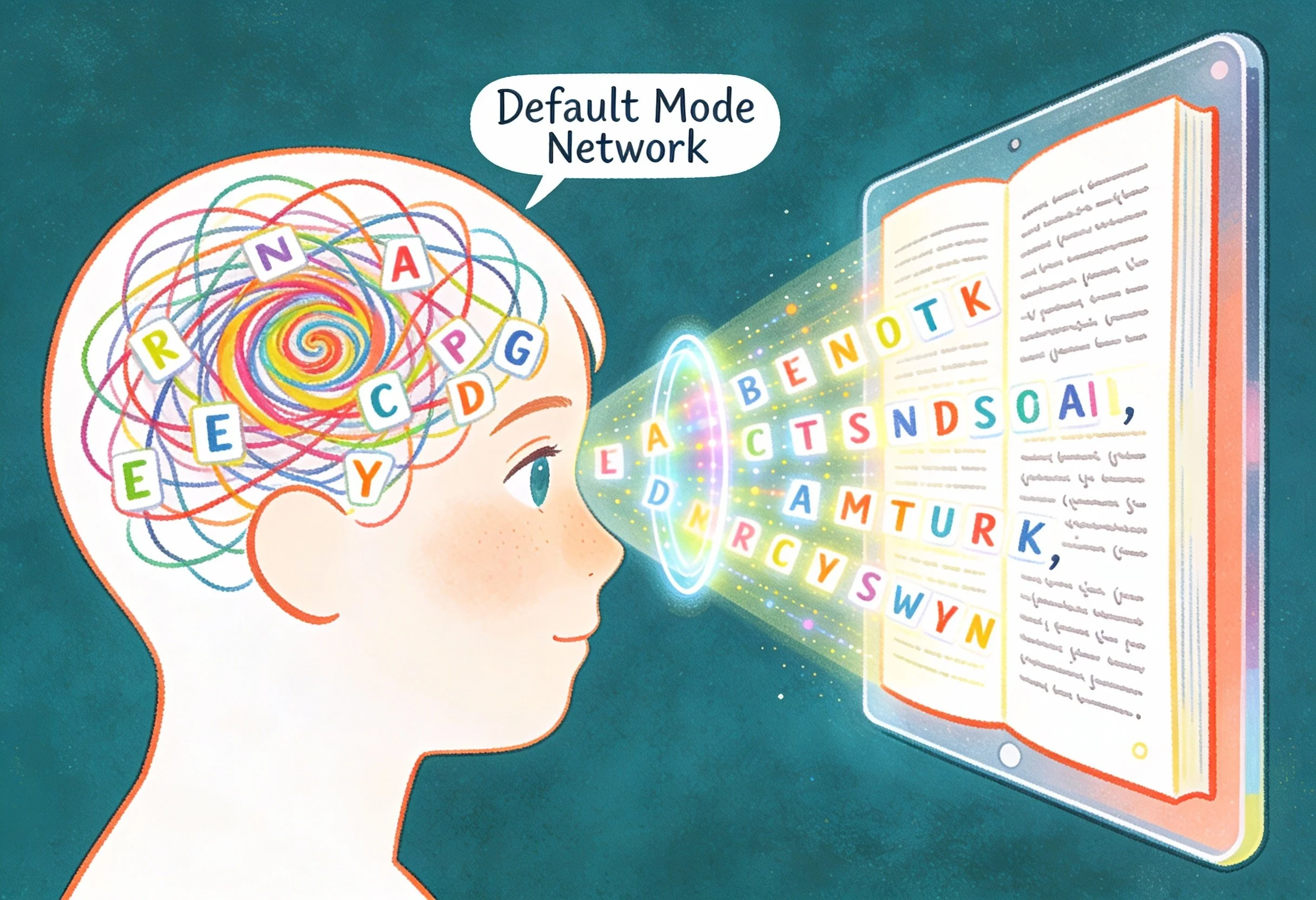

There is a psychological theory of how the self develops called self-determination theory. It identifies three things humans need to develop motivation and a stable sense of self: autonomy (feeling like your choices are your own), competence (feeling capable), and relatedness (feeling connected to others).

Surveillance attacks autonomy directly. When you're being watched, you're not thinking freely. You're thinking under observation. That's a completely different psychological experience. Your mind shifts from "What do I actually believe about this?" to "What is safe to express about this?"

When surveillance becomes the permanent condition of thinking, you stop asking questions you're unsure about. You stop exploring ideas that might look bad. You learn to masquerade as already having arrived instead of engaging in the messy process of actually becoming someone. That's not a side effect of these systems. That's what surveillance functionally does.

The unfortunate truth is that your children are being asked to grow up in a glass house where the performance never ends. Unlike any generation before, the developmental mistakes children make now are captured, profiled, and potentially permanent. The push for real-ID verification means every curiosity, every abandoned experiment in self-presentation, gets tied to your legal identity. Data fusion means your social media behavior can be correlated with your location, your purchases, your school records.

This isn’t only a loss of privacy. It’s also the loss of the developmental space where identity formation actually happens. And it isn’t the kids’ fault. They didn't build these systems. They didn't choose to have their thinking monitored. But they'll be living with the consequences.

I'm not going to tell you to delete all your apps. I know that's not realistic. But I want you to understand what's happening so you can make informed choices. If there’s anything to take away from this article it’s the following:

Know that "delete" doesn't mean gone. The content you create and delete still becomes data. The hesitation, the revision, the abandonment can all be captured. Think about that before you create.

Find spaces that aren't surveilled. Write in a physical notebook sometimes. Have conversations in person. Let yourself think without a device present. You need practice having thoughts that don't become data.

Recognize when you're being targeted. If you notice that the content in your feed consistently makes you feel inadequate, insecure, or anxious, recognize that it’s not random. The algorithm has learned your vulnerabilities and is exploiting them. You can choose not to engage.

Understand the business model. You're not the customer. You're the product. The platform's goal isn't your wellbeing but your engagement. Knowing this doesn't make you immune, but it helps you see the game.

Talk about this. Most people don't know that deleted content isn't private, and that their posting and deletion patterns are tracked. Sharing this knowledge with others is itself a form of resistance.

We must not forget that adults built these systems. Adults allowed them to be deployed on children. And adults do have the power to change this.

Parents need to understand that their kids aren't being dramatic when they say they feel constantly watched. They are constantly watched. That's the reality that’s been standardized across social media. Schools need to reconsider whether surveillance software that monitors students is actually protecting students or just teaching them that thinking is dangerous. Lawmakers need to recognize that cognitive privacy is a fundamental requirement for human development. We protect children from exploitation in every other domain. We should protect their minds too.

What I hope you take away from this is that the capacity to think privately, to make mistakes without permanent records, and to figure out who you are without algorithmic observation isn’t a luxury but a developmental necessity.

You have a human right to that privacy.

All children deserve the same opportunity previous generations had. They deserve the chance to grow up with room to experiment, to be confused, to change their minds, to become someone different than who they are right now. That opportunity is being stolen from them, and most adults don't even realize it's happening.

Now you know. What you do with that knowledge is up to you.
