Making Human Capability Visible

Competency-based education isn't new. Its roots trace back decades, through mastery learning, outcomes-based education, and various reform movements. It has passionate advocates and proven implementations. It also has critics who worry about standardization, measurement challenges, and implementation complexity.

What's new is the context. When AI can produce any output a compliance-based system might require, the question of what credentials actually certify becomes unavoidable. A student's transcript can document four years of submitted assignments while revealing nothing about their ability to think, write, or communicate without technological scaffolding.

This is the situation facing every school, every teacher, every student right now. The old system worked (sort of) when producing good outputs required developing good capabilities. That connection has been severed. We need assessment systems that directly evaluate what students can do, not what they can produce.

The Capabilities That Require You

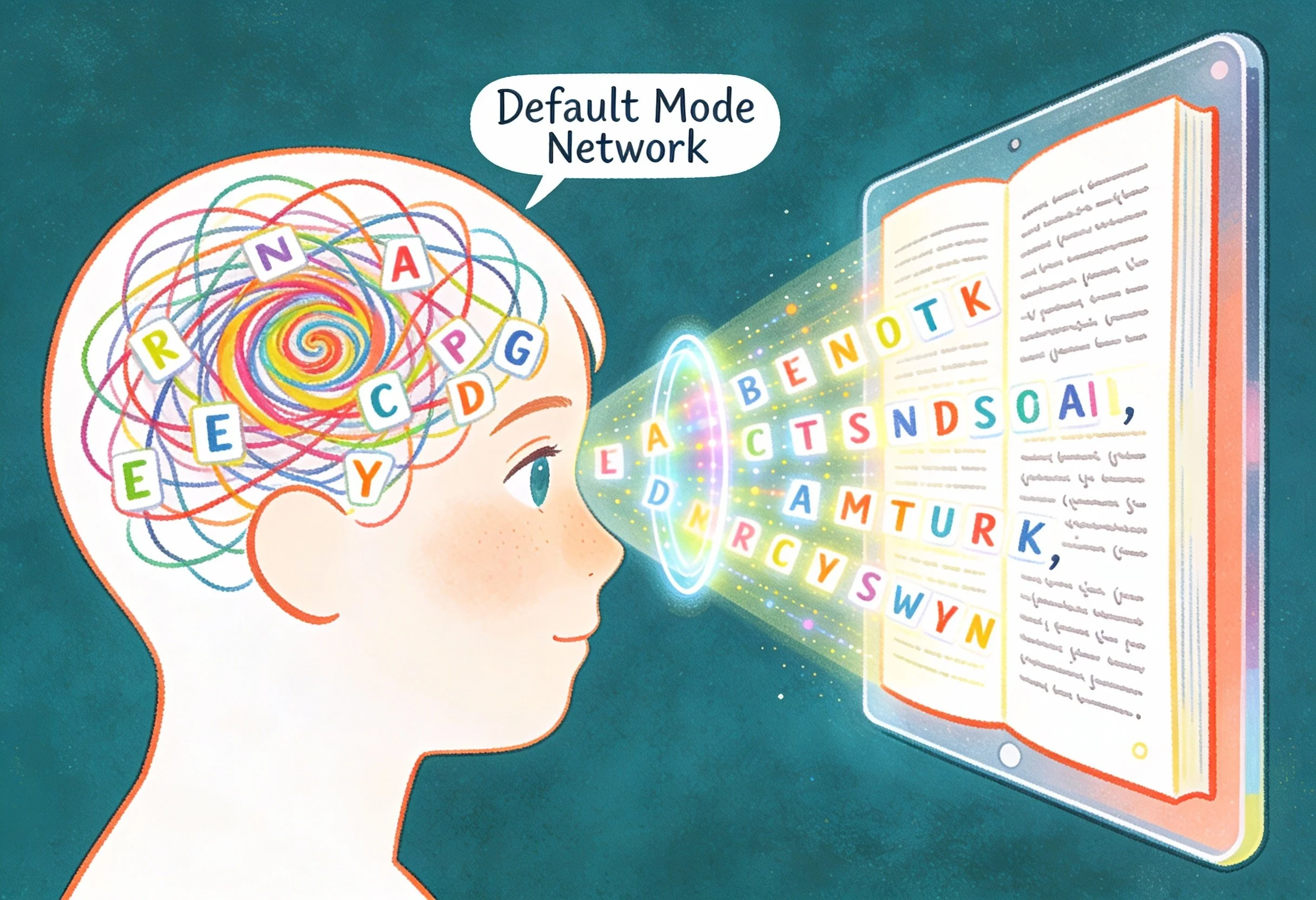

Not all competencies are equally relevant to the AI challenge. Some capabilities transfer easily to machines. Recall of factual information. Organization of existing knowledge. Production of grammatically correct prose. Computation of numerical problems. These were never the deepest goals of education, but they were often what got assessed because they were easy to measure.

Other capabilities resist transfer. Moral reasoning under genuine uncertainty. Integration of lived experience with abstract concepts. Real-time adaptation to unexpected challenges. Creative synthesis across subject areas. Authentic connection with other humans. These have always been central to what education should develop, but they were often neglected because they were hard to measure.

AI hasn't changed which capabilities matter. It just made the measurement problem impossible to ignore. If we assess recall, AI will recall better. If we assess organization, AI will organize better. If we assess polish, AI will polish better. Any competency that can be demonstrated through a static artifact is vulnerable to substitution.

The competencies that matter now are the ones that require human presence, human context, human judgment, human relationship. Not because humans are inherently superior, but because these capabilities can only be demonstrated by the specific human who possesses them.

Research on competency frameworks, from UNESCO's AI Competency Framework for Teachers to various national qualification systems, converges on three categories:

Thinking that requires your judgment. Critical analysis, creative synthesis, adaptive problem-solving, metacognitive awareness. These capabilities can be augmented by AI but not replaced by it, because they require the human to evaluate, select, and integrate in ways that depend on specific purposes and situations.

Connection that requires your presence. Empathy, collaboration, communication, relationship-building. AI can simulate these capabilities in narrow contexts, but authentic human connection requires presence, responsiveness, and the kind of mutual vulnerability that only humans can offer each other.

Decisions that require your judgment. Recognizing ethical dimensions of situations, weighing competing values, taking responsibility for choices, acting with integrity even under pressure. AI can analyze and even model ethics, but it cannot take moral responsibility for decisions. That weight falls on humans alone.

Same Skill, Different Design

I don’t believe we need to ban AI or catch cheaters. We need to design assessments where human capability becomes visible and where the task itself requires the student to be present, thinking, and accountable.

Here's what that looks like in practice with two examples:

Persuasive Writing (English/Social Studies)

Compliance version: Write a persuasive essay arguing for or against a school uniform policy. Include a clear thesis, three supporting arguments, and a conclusion. Use at least three credible sources.

All this does is measure compliance with essay format, source integration, and argument structure. AI handles all of it effortlessly, so the credential certifies nothing.

Competency version: Your school board is voting on a uniform policy next month. They've asked for student input. Prepare a three-minute oral argument to deliver at the board meeting, where you'll face questions from board members. Your argument must include: (1) a clear position, (2) evidence drawn from your own experience at this school, and (3) a direct response to the strongest counterargument. You'll submit a video of yourself delivering the argument, followed by a live Q&A session with your teacher playing the role of a skeptical board member.

We’ve now moved deeper into persuasive argumentation where the student must appear on video, draw on personal context, and respond in real time to unanticipated questions. Substitution is architecturally impossible.

Scientific Reasoning (Science)

Compliance version: Write a lab report on your pendulum experiment. Include hypothesis, procedure, data table, analysis, and conclusion.

This measures format compliance and data presentation. A lab report is an important part of recording the scientific process, but AI can generate one from minimal user input.

Competency version: Your pendulum experiment produced unexpected results. In a 5-minute oral defense, explain:

(1) what you expected to happen and why

(2) what actually happened

(3) at least two possible explanations for the discrepancy

(4) how you would redesign the experiment to test which explanation is correct

Your teacher will interrupt with questions. You may use your data, but no notes on what to say.

This version assesses scientific reasoning under uncertainty: real-time problem-solving and the ability to think through unexpected results. The student must reason live, not recite.

The Design Principles

Across these examples, several principles emerge:

Require real-time demonstration. Oral defense, live Q&A, and in-class problem-solving make substitution impossible because AI can't show up and think on the student's behalf.

Integrate personal context. When students must draw on their own experiences, observations, or local knowledge, they're accessing material AI doesn't have.

Demand metacognition. Asking students to reflect on their thinking process (what they'd do differently and why they made certain choices) shows understanding that can't be faked.

Build in unpredictability. Teacher questions, unexpected problems, and real-time challenges require adaptive thinking rather than rehearsed performance.

Make the human visible. Video submissions, oral presentations, and live interactions put the student's presence at the center of the assessment.

What Happens If We Don't

Schools and universities will continue issuing credentials that do not differentiate between human capability and AI output. Students will graduate with transcripts full of AI assistance and heads empty of transferable capabilities. Employers will lose faith in educational credentials entirely. The students who actually developed genuine abilities will become indistinguishable from those who gamed the system.

And the students who never learned to think keep showing up at college, sitting in advisor offices, realizing for the first time that they can't do what their credentials claim.

The system that produced them is still running in most schools. The alternative exists. We need the institutional will to build it.
